RoboCon 2026: Test Automation in the Era of AI

Are we ready for the mindset shift Abdelkader Hassine and Pavlo Ivashchenko present here?

Though I pretty much started my career with Robot Framework (RF), lately I’ve been exploring other territories of the DevOps continent. Attending the annual RF conference RoboCon (12-13 February) was meant to rekindle an old flame, but it ended up igniting a whole new bonfire. This year’s event was sparkling with AI: how it changes the way we write and run tests, and how it affects learning for individuals and communities. The stage hosted one great talk after another, ranging from new libraries to physically baking pancakes(!) as a metaphor for test automation. There was also a bright spotlight on the wonderful community around this open-source framework. In this blog post I pass the torch from open source to any software organization and shed some light on how the presenters use AI for test automation. Here’s what stuck with me.

1. AI-Generated Old-Fashioned Test Cases

Many Kasiriha presented RF-MCP, a Model Context Protocol (MCP) server for RF. It gives AI agents a set of tools, almost like phases or steps, through which they gain the knowledge needed to generate RF test cases. RF-MCP lets you specify which libraries to use, and it keeps executing the steps to check that they actually work. One important takeaway applies to AI-assisted coding in general: ask the AI for a plan first (no code!), including things like which libraries it plans to use. Nudge it until the plan looks good, and only then ask for the implementation.

There was also a lightning talk by David Fogl on using Agent Skills to generate Robot Framework test cases. Agent skills are a way to specialize an agent for certain workflows, practices and/or domains. Basically, a skill is a file that describes context, rules and examples. The end result is similar to RF-MCP: a good old deterministic test case.

Here I must jump back in time to the workshop day, which took place two days before the main conference. I attended a workshop by Krzysztof Żminkowski and Agnieszka Żminkowska on using a RAG (retrieval-augmented generation) solution for API testing. In the workshop, RAG provided a local LLM (large language model) with structured information about the API under test, which enabled the LLM to figure out what to test. Generating the test code, i.e. how to test, relied on an open-source LLM that had obviously skipped its RFCP (Robot Framework Certified Professional) training. An interesting experiment would be to complement RF-MCP with RAG.

In any case, RAG can provide an LLM with any information outside its training data, such as project-internal documentation. As such, it has many potential applications in the test generation process beyond the API example.
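To make the RAG idea more concrete, here is a minimal sketch of the retrieval-and-augmentation step in Python. It is not the workshop's solution. The API snippets are hypothetical, and plain word-count cosine similarity stands in for a real embedding model and vector store. The prompt it builds is what would then be sent to the LLM.

```python
import math
import re
from collections import Counter

# Hypothetical API documentation chunks; in a real setup these would come
# from an OpenAPI spec, a wiki or other project-internal sources.
API_DOCS = [
    "POST /users creates a user. Body: name (string, required), email (string, required).",
    "GET /users/{id} returns a single user. Responds 404 if the user does not exist.",
    "DELETE /users/{id} removes a user. Requires an admin token.",
    "GET /health returns service status for monitoring.",
]


def tokenize(text: str) -> Counter:
    """Lower-case word counts; a crude stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Retrieval: return the k documentation chunks most similar to the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(task: str, docs: list[str], k: int = 2) -> str:
    """Augmentation: prepend the retrieved context to the task for the LLM."""
    context = "\n".join(f"- {chunk}" for chunk in retrieve(task, docs, k))
    return f"Context about the API under test:\n{context}\n\nTask: {task}"


if __name__ == "__main__":
    print(build_prompt("Write tests for the DELETE endpoint that removes a user", API_DOCS))
```

With this toy data, the DELETE chunk ranks first, so the LLM receives the admin-token requirement it would otherwise have to guess.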

So the first approach is to use AI as a test case generator. You get a classic, repeatable test; you review and maintain it like any other automated test case.

2. AI As a "Manual Tester"

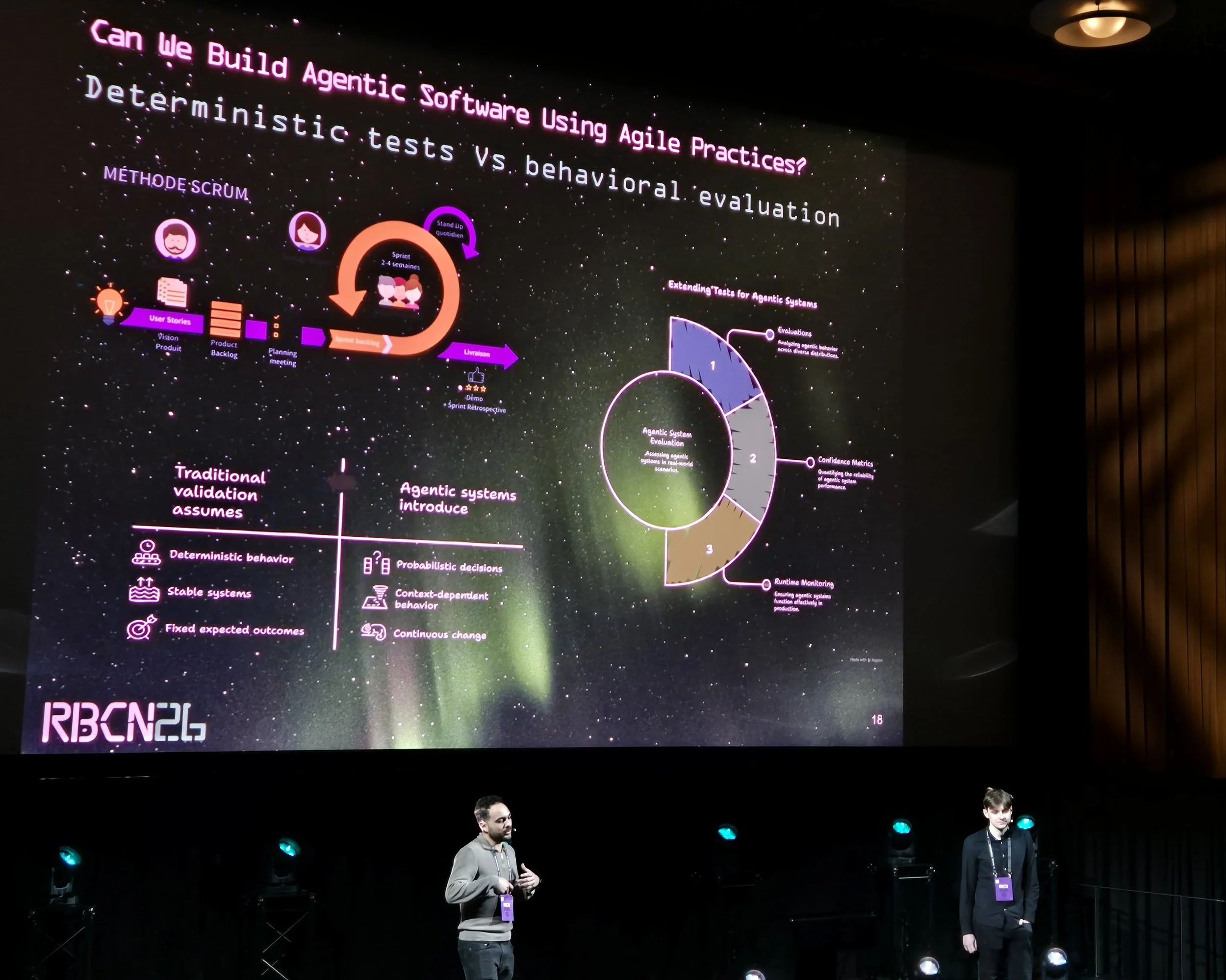

“What If Robot Framework Have a Brain” by Abdelkader Hassine and Pavlo Ivashchenko led us down another path: instead of writing a test case, the AI performs each step itself. The solution was mainly targeted at GUI testing. Conventional tools assert against a representation of the UI (DOM, locators, etc.); here, GenAI is used to “understand” what’s on the screen and act on that perception. A big chunk of the talk was about using AI to find the right elements on the screen, backed by academic work and a benchmark comparing different solutions.

Using AI this way makes the test non-deterministic: every run can involve new AI decisions. Someone in the audience raised the obvious concern about predicting the cost (tokens) and execution time of each run. I’d add that allowing non-deterministic test cases would require a fundamental shift in testing mindset. On the upside, testing would get closer to what the user actually sees, similar to image recognition solutions. Compared to image recognition, AI might be less flaky when the style or layout changes.

So the second approach is using AI as a runtime executor. It’s handy for “see and act like a user,” but you trade off predictability and cost, and you need to be willing to adopt a new mindset that accepts non-deterministic tests.

3. AI As a Regression Suite Planner

“Can AI help us find bugs in Robot Framework faster?” by Fabian Streitel was all about picking the most efficient test cases instead of running the whole shebang. The idea was to use word embeddings from an LLM to figure out which tests are similar or redundant, and then run a smaller, high-value set. The solution was evaluated on the RF project itself with mutation testing: injecting artificial faults into the RF core code and checking whether the acceptance tests catch them. The mutation testing results of the full suite were then compared to those of the selected set. It’s also possible to compare the code coverage of these test sets by instrumenting the code under test.
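The selection idea and its mutation-testing evaluation can be sketched in a few lines of Python. This is a hypothetical illustration, not Fabian Streitel's implementation. Word-count vectors stand in for the LLM embeddings, the test names, steps, mutant IDs and the 0.95 similarity threshold are all invented, and the selection is a simple greedy redundancy filter.

```python
import math
import re
from collections import Counter

# Hypothetical test cases, each represented by the text of its keyword steps.
TESTS = {
    "Login With Valid Password": "open browser input username input password click login page should contain welcome",
    "Login With Valid Password Again": "open browser input username input password click login page should contain welcome",
    "Login With Wrong Password": "open browser input username input password click login page should contain error",
    "Create Invoice": "open browser click new invoice input amount click save page should contain saved",
}

# Hypothetical mutation-testing results: the injected faults each test detects.
KILLED_BY = {
    "Login With Valid Password": {"m1", "m2"},
    "Login With Valid Password Again": {"m1", "m2"},
    "Login With Wrong Password": {"m1", "m3"},
    "Create Invoice": {"m4"},
}
ALL_MUTANTS = {"m1", "m2", "m3", "m4", "m5"}


def vectorize(text: str) -> Counter:
    """Word counts; a stand-in for an embedding produced by a language model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def select_non_redundant(tests: dict[str, str], threshold: float = 0.95) -> list[str]:
    """Greedily keep a test only if it is not too similar to any test already kept."""
    vectors = {name: vectorize(steps) for name, steps in tests.items()}
    selected: list[str] = []
    for name in tests:
        if all(cosine(vectors[name], vectors[kept]) < threshold for kept in selected):
            selected.append(name)
    return selected


def mutation_score(test_names: list[str]) -> float:
    """Fraction of all injected mutants killed by at least one of the given tests."""
    killed = set().union(*(KILLED_BY[name] for name in test_names))
    return len(killed) / len(ALL_MUTANTS)


if __name__ == "__main__":
    subset = select_non_redundant(TESTS)
    print("selected:", subset)
    print("full suite score:", mutation_score(list(TESTS)))
    print("selected set score:", mutation_score(subset))
```

With this toy data, the duplicated login test is dropped. The three remaining tests kill the same four of five mutants as the full suite, so both score 0.8. The greedy filter depends on test order and on the threshold, and picking that threshold is exactly the “test one thing” versus “cut redundancy” trade-off.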

I think a natural next step would be to use the redundancy information to refactor the test suites into more effective test cases. This, however, raises the trade-off between “test only one thing at a time” and “cut redundancy”.

Edin Tarić presented Medusa, a new tool for parallel test execution with Robot Framework. My takeaway: you can go further by combining parallel execution (Medusa or, e.g., Pabot) with intelligent test case selection. In other words, don’t just “hire more shovels” by running tests in parallel; run the right tests.
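Combining the two could look roughly like this: select first, then distribute the remaining tests across workers. This sketch uses the classic longest-processing-time-first heuristic with hypothetical historical durations. It does not reflect how Medusa or Pabot actually schedule tests.

```python
import heapq

# Hypothetical historical run times (seconds) for the tests that survived selection.
DURATIONS = {
    "Login With Valid Password": 30.0,
    "Login With Wrong Password": 25.0,
    "Create Invoice": 50.0,
}


def shard(tests: list[str], durations: dict[str, float], workers: int) -> list[list[str]]:
    """Longest-processing-time-first: give each test to the currently least-loaded worker."""
    heap = [(0.0, i) for i in range(workers)]  # (total load, worker index), already a valid heap
    shards: list[list[str]] = [[] for _ in range(workers)]
    for test in sorted(tests, key=lambda t: durations[t], reverse=True):
        load, i = heapq.heappop(heap)
        shards[i].append(test)
        heapq.heappush(heap, (load + durations[test], i))
    return shards


def wall_clock(shards: list[list[str]], durations: dict[str, float]) -> float:
    """Estimated run time: the slowest worker determines when the run finishes."""
    return max(sum(durations[t] for t in s) for s in shards)


if __name__ == "__main__":
    plan = shard(list(DURATIONS), DURATIONS, workers=2)
    print(plan)
    print("estimated wall clock:", wall_clock(plan, DURATIONS), "s")
```

Here two workers finish in an estimated 55 s instead of 105 s serially. Each test removed by selection shortens the run further, regardless of how many workers are available.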

So a third role for AI is curating and optimizing what you actually run.

4. Building the Infrastructure and Practices

The theme that tied a lot of this together for me was that AI requires engineering practices. We’re not just asking one generic oracle for everything; instead, we’ve got specialized agent skills, MCP servers, and RAG. A lot of the craft is now about designing the development environment: which AI tools you expose, how agents are tailored to your project, what makes a good prompt, and how all of this is shared across an organization.

The panel discussion hosted by René Rohner, with Tatu Aalto, Many Kasiriha, David Fogl, Pekka Klärck and Arttu Taipale, had some highlights for me. Working alone with AI can lead to suboptimal results, because the model doesn’t push back on our decisions. We still need to talk through solutions with other developers. And because AI can deliver so much code so fast, code review fatigue is a real risk, one I’ve been thinking about.

5. Learning and Community

Underneath all of this, learning kept coming up. Miikka Solmela’s opening presentation “Community in the Age of AI” hit on it: as we move from “we solve” to “I prompt”, we need to keep learning visible and shared.

In his presentation “How AI tools affect learning and the implications on open source tools”, Arttu Taipale talked about how retrieving information is central to learning, and how we’re handing more and more of it to AI. Organizations now have to plan explicitly how to train juniors; previously, learning often “just happened” through tasks that AI handles nowadays. The panel discussion added that juniors might not know the right questions to ask. I experienced that long before AI: when there’s a ton of information, we still need mentors and tasks that match where we’re at. From my point of view, continuous learning will be a challenge for seniors as well when AI is feeding us faster than we can chew.

My Five Takeaways

Generate deterministic test cases (e.g. RF-MCP, Agent Skills): AI writes the test; we run and maintain it like any other test case.

Execute steps with AI (“Robot Framework with a brain”): AI sees and acts; powerful but less predictable in cost, time and test results.

Select and optimize the test set: use AI to run fewer, more effective tests and to re-evaluate the suite; combine with parallel execution (e.g. Medusa) to speed things up.

Design the environment: MCP, agent skills, RAG, and smart prompting so AI helps us build tests in a controlled, reviewable way.

Work together: keep your co-workers in the loop to share knowledge and get honest human feedback.

RoboCon 2026 laid a map in front of me: whether “AI generates test code” or “AI performs test steps”, the next stop is smarter test case selection. With proper tooling as our vehicle and knowledge-sharing practices as our guide, a well-designed road infrastructure of AI tools smooths the ride.